Geospatial Intelligence for Grid Resilience

The shift from mapping to decision-grade intelligence

Why Geospatial Intelligence Matters Now

What if the most critical infrastructure we rely on every day, our power grid, is struggling to keep up with a volatile climate and rising electricity demand? In the United States, the electric grid is increasingly strained by everything from wildfire shutoffs and hurricanes to extreme heat and a rapidly growing backlog of energy projects waiting to connect. The Department of Energy (DOE), the Federal Energy Regulatory Commission (FERC), and state regulators are under pressure to keep electricity affordable and safe while also maintaining reliability.

In this context, geospatial intelligence is no longer just a mapping exercise. It has become a critical enabler of decisions about where and how to build, protect, and manage the systems that keep the lights on.

At the same time, advances in remote sensing, earth observation, and spatial analytics have created an unprecedented stream of data about the physical world. Satellites, aerial imagery, lidar, and sensor networks are generating high-resolution, near-real-time views of land, vegetation, and infrastructure. Combined with advances in cloud computing and machine learning, this data can be turned into decision-grade intelligence that helps utilities and governments manage climate risk. The shift underway is not about replacing maps with better maps. It is about embedding location-based intelligence directly into the workflows of grid planning, permitting, risk assessment, and resilience investment.

From Mapping to Infrastructure Decisions

The leap from traditional mapping to decision support is best understood in the power sector. Transmission and distribution planning depends on location-specific constraints. Transmission siting requires detailed knowledge of terrain, environmental restrictions, and community exposure. Distribution hosting capacity depends on spatially granular data about feeders, substations, and demand growth. Wildfire mitigation requires accurate vegetation and ignition risk mapping. Flood resilience depends on understanding elevation, drainage, and critical asset location.

These needs are converging at a moment when regulators are raising the bar for resilience planning. FERC Order No. 2023 has restructured the interconnection process to accelerate studies and reduce delays, but it has also heightened the need for transparent, data-driven siting decisions. State commissions are demanding that utilities produce resilience plans that quantify physical risks, justify investment priorities, and show clear benefits for ratepayers. DOE resilience funding under the Infrastructure Investment and Jobs Act and the Inflation Reduction Act is tied to evidence of hazard exposure and community impact. All these policy drivers point to one theme: utilities and regulators require geospatially grounded intelligence to make defensible, auditable decisions.

The Competitive Landscape

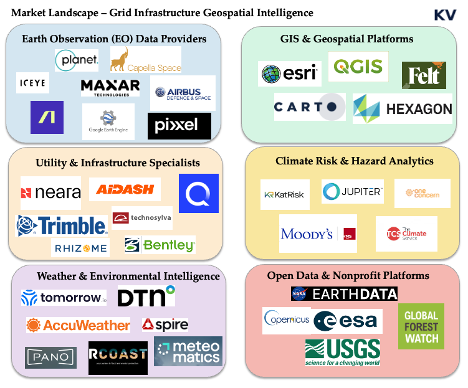

The field of geospatial intelligence has grown crowded, but most companies fall into recognizable categories. Each category plays an important role, yet none fully meets the specific demands of grid resilience.

Earth observation providers such as Planet, Maxar, ICEYE, Capella, Google Earth, and Airbus supply the raw imagery that underpins much of the sector. They deliver exquisite resolution and increasingly fast revisit rates, but they remain upstream in the value chain. Their core business is selling pixels, not delivering workflow-ready risk intelligence to utilities.

GIS incumbents such as Esri and the open-source QGIS ecosystem dominate enterprise mapping. They are powerful platforms that have trained entire generations of planners, yet they are heavy and often difficult to adapt to specialized resilience use cases. They also require substantial expertise to deploy, limiting their reach to organizations with large in-house teams.

Cloud-native mapping startups such as Felt and Atlas are trying to reimagine the GIS experience by making it more collaborative and accessible. Their strength lies in speed and usability, but they are still generic platforms. They lack the domain depth needed for transmission planning, wildfire mitigation, or hosting capacity analysis.

Climate risk analytics firms such as Jupiter Intelligence, One Concern, and Moody’s RMS focus on physical hazard modeling. They excel at projecting the financial implications of climate risk for insurers and banks. Their products are influential in capital markets, but they do not integrate directly into the operational decisions of utilities that must decide how to reroute a line or prioritize vegetation management.

Weather intelligence companies such as Tomorrow.io, Spire, and Meteomatics provide high-resolution forecasts and reanalysis. They are increasingly sophisticated, but their output is organized around time and weather variables rather than operationalized around specific assets and locations. They tell us what the weather is likely to be, but not how a specific feeder line in a wildfire zone should be managed.

Finally, utility and infrastructure specialists such as AiDash, Neara, Technosylva, Trimble, and Bentley provide focused tools for vegetation management, digital twins, wildfire spread modeling, and infrastructure design. They are closest to the needs of utilities, but they are often narrow in scope, expensive to deploy, and difficult to integrate into broader resilience and planning workflows.

The market is crowded with strong but partial solutions. Earth observation providers sell exquisite pixels but stop upstream of utility workflows. GIS incumbents are powerful foundations but require expertise and services to reach resilience use cases. Cloud-native mappers are fast and accessible but remain generic canvases. Climate risk firms excel at hazard and finance, weather vendors excel at forecast delivery, and utility specialists deliver depth for narrow problems with heavier deployments. Taken together, the pattern is clear enough to act on. Incumbents are powerful but general, newer tools are agile but thin for utility-grade work, and buyers still stitch systems together to move from a map to a decision.

Why Power Systems Are the Demand Center

Although geospatial intelligence has applications in agriculture, real estate, insurance, and defense, the power system is where demand is most urgent and buyers are most willing to pay. The grid is simultaneously the backbone of the energy transition and the front line of climate impacts. In addition, the rapid growth of artificial intelligence (AI) and the digital infrastructure that supports it means that the U.S. needs more electricity than ever. Utilities, independent system operators, and regulators face three interlinked challenges.

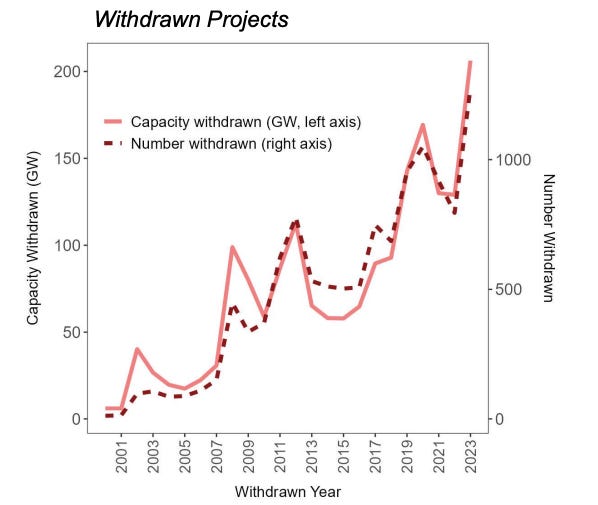

The first is accelerating interconnection and siting. With gigawatts of renewable projects stuck in interconnection queues, better spatial intelligence can shorten timelines, reduce disputes, and clarify upgrade needs. These delays cost ratepayers over $20.8 billion in 2022 and have led the vast majority of projects (80%) to drop out of the queue. Figure 2 shows the number of projects and the capacity withdrawn from the interconnection queue. One startup, Grid8, which aims to use advanced modeling and AI to streamline and accelerate grid interconnection, recently created a free dashboard that shows all projects in interconnection queues across the United States.

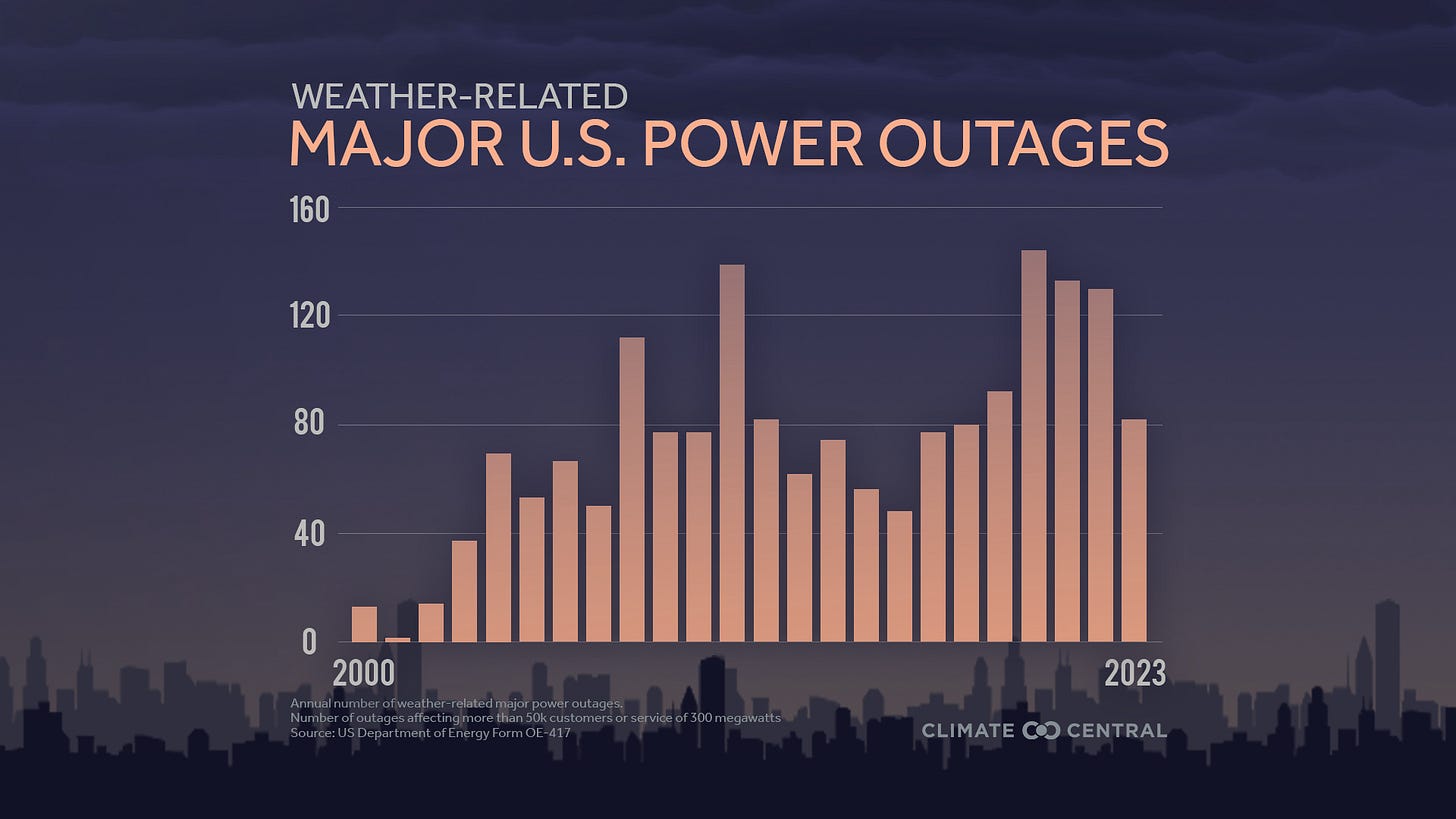

The second is reducing hazard-driven outages. Wildfire-related Public Safety Power Shutoffs in California, hurricane-related outages in the Southeast, and flooding in the Midwest have all demonstrated that utilities need spatial intelligence to anticipate and mitigate events before they force service cuts. According to Climate Central, 80% of major power outages in the U.S. between 2000 and 2023 were caused by extreme weather, as shown in Figure 3.

The third is producing regulator-ready resilience filings. Commissions and federal agencies are asking for detailed, auditable resilience plans. These require geospatially explicit evidence of hazard exposure, cost-effectiveness, and equity impacts.

In all three cases, geospatial intelligence is not a nice-to-have. It is the evidentiary backbone for decisions that determine billions of dollars of investment and millions of customer outcomes.

Market gap: Where the white space is and how to win

Despite the crowded landscape, several white spaces remain. The most important is integrated grid access and interconnection intelligence. Developers and utilities alike lack tools that combine hosting capacity, asset health, locational marginal prices, and large load tariffs into a single siting and interconnection dashboard. The result is a fragmented process that delays clean energy deployment.

The unmet need is a product that combines three qualities at once. It must be vertically specialized for grid outcomes, fully integrated into the systems where decisions are made, and simple to adopt. Specialization means hosting capacity screens, interconnection cost and timeline signals, vegetation and ignition risk, flood depth at asset elevation, and tariff and large load tariff context that is ready for an engineer and a regulator. Integration means connectors to GIS, the asset registry, work management, and control and outage systems, plus exports that land cleanly in filings and approvals. Ease of adoption means a cloud service, a short path to the first result, bundled data where licensing allows, clear model notes, and weeks, not quarters, to value. And it must deliver all of this at lower cost than today's patchwork of tools.

The practical route to this product is to start with one burning use case and expand from there. Begin with interconnection intelligence or compound hazard operations. Ship the full workflow end-to-end, including the data bundle, the validation notes, and the evidence pack for approvals. Only then add modules for adjacent jobs such as vegetation planning, flood hardening, or tariff-aware siting. Keep services light by templating integrations and by publishing a stable set of application programming interfaces (APIs). The product should feel like a working surface for operators and developers rather than a toolkit that demands a large internal team.

What ‘good’ looks like

To address these challenges, the next generation of geospatial solutions must be specialized, integrated, and easy to adopt. Such solutions would benefit all utilities, while smaller players represent a particularly underserved market. Key market gaps, and what a "good" solution looks like for the industry, include the following:

1. Lightweight digital twins: While large investor-owned utilities (IOUs) can afford sophisticated modeling platforms, there is a need for simpler, cloud-based solutions that can provide actionable insights for utilities of every size. This is an important opportunity, as these solutions could serve smaller municipal utilities and cooperatives that lack the resources for more expensive systems.

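2. Compound hazard dashboards: All utilities need tools that can model the interactions among different hazards. While utilities often assess risks like hurricanes, tornadoes, wildfire, flooding, and extreme heat separately, the real danger comes from how these hazards interact with each other. For example, a heat wave that drives peak demand can coincide with dry, windy conditions that raise ignition risk along the same lines that are running hardest.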

3. Permitting and route-risk intelligence: Transmission projects are often delayed by environmental and community disputes. Spatial tools that highlight environmental constraints and hazard exposure along potential corridors can help the entire industry identify "least-conflict routes" and reduce litigation.

4. Vegetation management and ignition risk tools: The need to manage vegetation and ignition risk is a universal concern for all utilities. However, current solutions can be expensive and complex, which makes them difficult for many organizations to deploy. This creates a specific market opportunity for scalable, subscription-based services that use satellite and aerial imagery to serve hundreds of smaller utilities and cooperatives.
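The "least-conflict route" idea in item 3 is often framed as a least-cost path problem over a raster cost surface. As a minimal sketch under stated assumptions (not a production routing tool, and not any vendor's method), each grid cell gets a hypothetical conflict cost built from an environmental-sensitivity layer and a hazard-exposure layer, both assumed to be scaled to [0, 1]; protected cells are treated as hard exclusions; and Dijkstra's algorithm finds the cheapest 4-connected route between two endpoints. The function names `combine_layers` and `least_conflict_route` and the weights `w_env` and `w_hazard` are illustrative assumptions.

```python
import heapq
from typing import Dict, List, Tuple

Cell = Tuple[int, int]
Grid = List[List[float]]

INF = float("inf")


def combine_layers(env: Grid, hazard: Grid, protected: List[List[bool]],
                   w_env: float = 5.0, w_hazard: float = 3.0) -> Grid:
    """Build a per-cell conflict cost surface (hypothetical weighting).

    Each cell costs 1.0 (base construction cost) plus weighted environmental
    sensitivity and hazard exposure, both assumed to be scaled to [0, 1].
    Protected cells become impassable (infinite cost).
    """
    return [
        [INF if protected[r][c] else 1.0 + w_env * env[r][c] + w_hazard * hazard[r][c]
         for c in range(len(env[0]))]
        for r in range(len(env))
    ]


def least_conflict_route(cost: Grid, start: Cell, goal: Cell) -> Tuple[float, List[Cell]]:
    """Dijkstra's algorithm on a 4-connected raster.

    A route's cost is the sum of the costs of every cell it occupies,
    including the start and goal cells. Returns (INF, []) if no route exists.
    """
    rows, cols = len(cost), len(cost[0])
    best: Dict[Cell, float] = {start: cost[start[0]][start[1]]}
    prev: Dict[Cell, Cell] = {}
    heap = [(best[start], start)]
    while heap:
        dist, cell = heapq.heappop(heap)
        if cell == goal:
            break
        if dist > best[cell]:
            continue  # stale entry superseded by a cheaper path
        r, c = cell
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if not (0 <= nr < rows and 0 <= nc < cols):
                continue
            step = cost[nr][nc]
            if step == INF:
                continue  # exclusion zone, e.g. a protected area
            new_dist = dist + step
            if new_dist < best.get((nr, nc), INF):
                best[(nr, nc)] = new_dist
                prev[(nr, nc)] = cell
                heapq.heappush(heap, (new_dist, (nr, nc)))
    if goal not in best:
        return INF, []
    # Walk predecessors back from the goal to recover the route.
    path = [goal]
    while path[-1] != start:
        path.append(prev[path[-1]])
    path.reverse()
    return best[goal], path


if __name__ == "__main__":
    # Toy 3x3 study area: the centre cell is environmentally sensitive.
    env = [[0, 0, 0], [0, 1, 0], [0, 0, 0]]
    hazard = [[0, 0, 0], [0, 0, 0], [0, 0, 0]]
    protected = [[False] * 3 for _ in range(3)]
    total, route = least_conflict_route(combine_layers(env, hazard, protected), (0, 0), (2, 2))
    print(total, route)  # total is 5.0; the route skirts the sensitive centre cell
```

The weights are where the real decisions live: raising `w_env` relative to `w_hazard` trades more hazard exposure for fewer environmental conflicts. In practice those weights, and the layers behind them, would need to be set and documented with planners and regulators rather than hard-coded, which is also what makes the resulting route auditable.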

Capital Movements and Startup Signals

The flow of venture capital into geospatial startups shows both opportunity and gaps. Felt raised $15 million in a round led by Energize Capital to build a collaborative mapping platform. Pano AI raised $44 million, led by Giant Ventures, to scale early wildfire detection infrastructure. Meteomatics secured $22 million, led by Armira Growth, to scale its weather intelligence business. Pixxel has raised a total of $95 million to expand hyperspectral satellite imaging. Albedo has raised nearly $100 million to deploy very low Earth orbit, high-resolution satellites. Rhizome raised $6.5 million to build a local climate resilience planning platform for grid infrastructure. Kapta raised $5 million to develop and operate spaceborne radar technology that provides low-latency, high-resolution geospatial intelligence.

These financings demonstrate investor appetite, but they also highlight a gap. Much of the capital is flowing into mid-stage or infrastructure-heavy plays. There is less support for the earliest-stage ventures spinning out of research labs and engineering groups. These companies often need only a few hundred thousand dollars to build pilots, pass security reviews, or access enterprise data. Without this catalytic capital, many promising ideas never leave the lab.

Investment Framework: Where Early-Stage Capital Matters Most

Early-stage investment is particularly powerful in this sector because the barriers to adoption are organizational rather than purely technical. Utilities need tools that plug directly into workflows, produce regulator-ready evidence, and can be adopted without large IT buildouts. This means that small, targeted investments can have an outsized impact if they enable a company to pass security screening, complete an enterprise pilot, or integrate with Esri or SAP.

The most promising entry points are those where urgency is highest. Interconnection delays cost developers millions of dollars per project. Wildfire-driven shutoffs create political and regulatory backlash. Resilience filings are becoming mandatory requirements tied to federal funding. Companies that target these pain points have a path to fast adoption.

Risk mitigation for investors requires a disciplined approach. Startups must start with a single high-urgency use case rather than trying to boil the ocean. They must provide prebuilt connectors to incumbent systems rather than asking utilities to rebuild workflows. They must embrace transparency so regulators can audit results. And they must control costs by combining open data with selective premium feeds rather than building fully proprietary datasets from scratch.

Conclusion

The case for investing in geospatial intelligence for grid resilience rests on three pillars. Demand is real and growing, driven by climate impacts and regulatory pressure. The competitive landscape is fragmented, leaving room for focused solutions that combine depth, integration, and ease of adoption. Early-stage capital can unlock this opportunity by helping startups cross the credibility gap with utilities and regulators.

The vision is of a climate-ready geospatial stack that is workflow native, utility-grade, and easy to adopt. This stack would embed location intelligence directly into the decisions that matter most: where to build, how to protect, and when to intervene. It would allow utilities to anticipate hazards, regulators to trust evidence, and developers to accelerate interconnection. In short, it would serve as the nervous system of a resilient grid in a climate-volatile world.
